ScaNeRF: Scalable Bundle-Adjusting Neural Radiance Fields for Large-Scale Scene Rendering

SIGGRAPH Asia 2023

(Journal track, selected for the Technical Papers Trailer)

Xiuchao Wu¹, Jiamin Xu², Xin Zhang¹, Hujun Bao¹, Qixing Huang³, Yujun Shen⁴, James Tompkin⁵, Weiwei Xu¹

¹Zhejiang University, ²Hangzhou Dianzi University, ³University of Texas at Austin, ⁴Ant Group, ⁵Brown University

Abstract

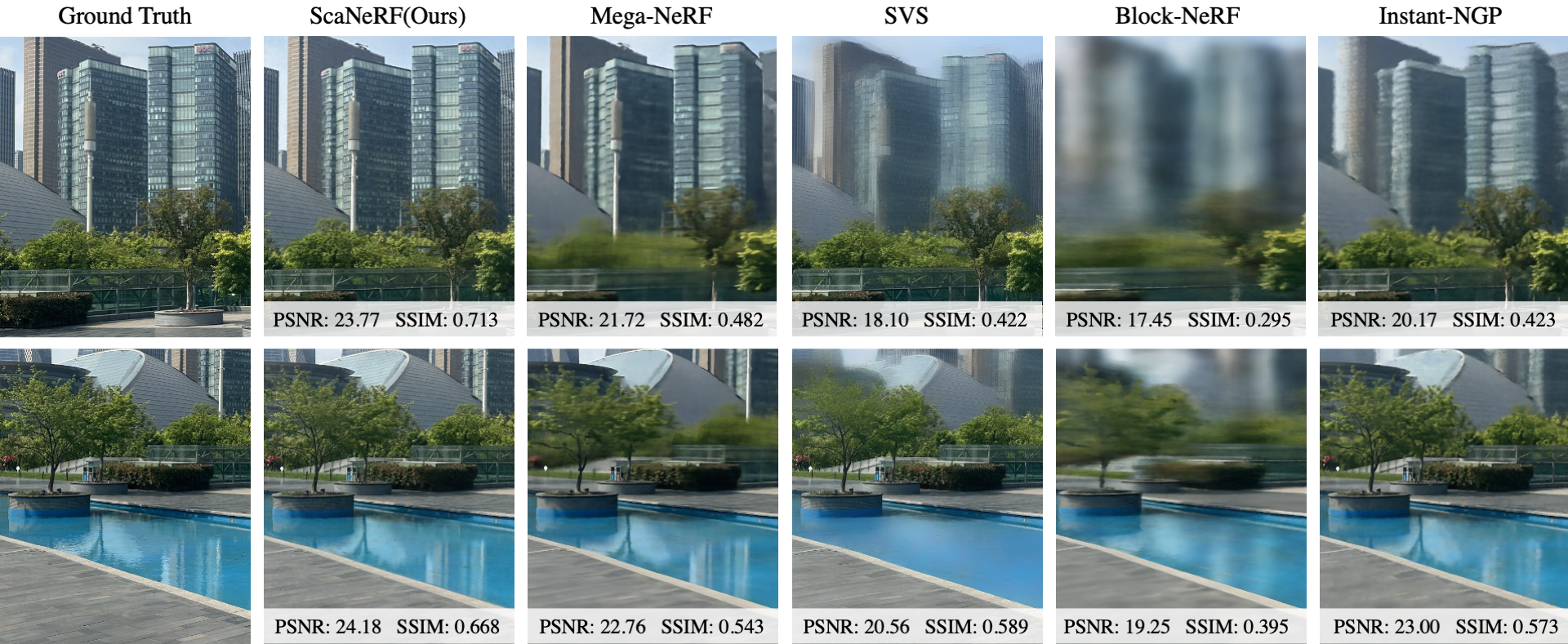

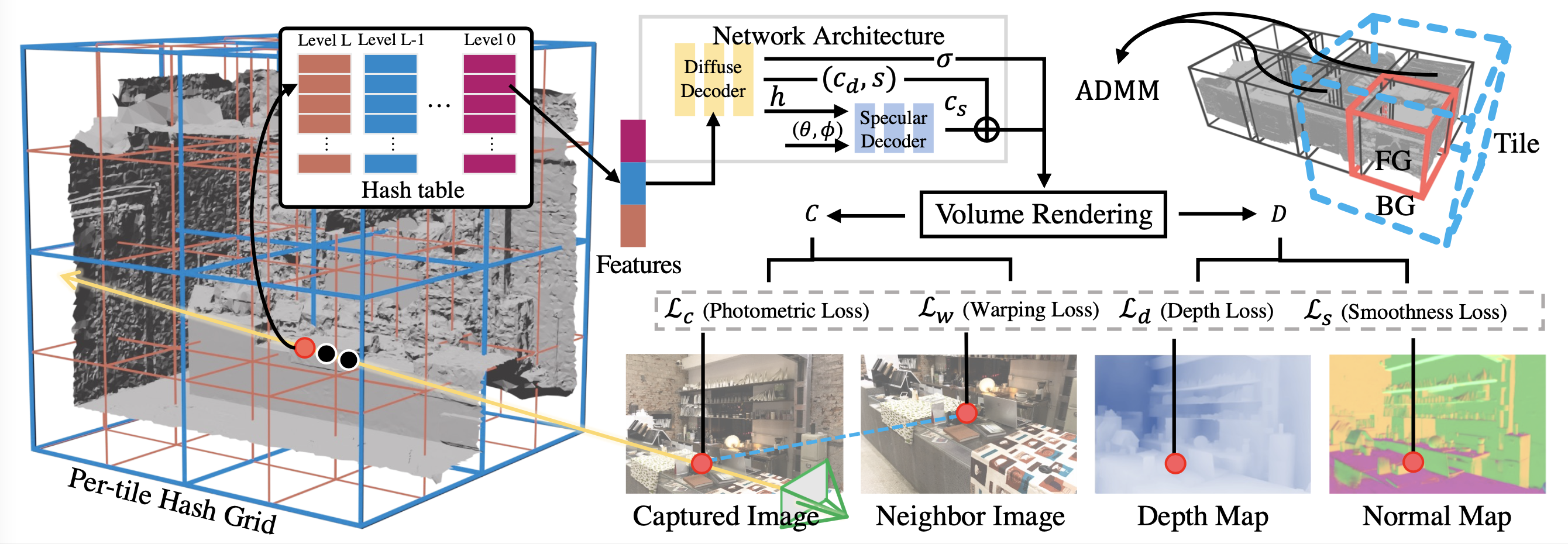

High-quality large-scale scene rendering requires a scalable representation and accurate camera poses. This research combines tile-based hybrid neural fields with parallel distributive optimization to improve bundle-adjusting neural radiance fields. The proposed method scales with a divide-and-conquer strategy. We partition scenes into tiles, each with a multiresolution hash feature grid and shallow chained diffuse and specular multilayer perceptrons (MLPs). Tiles unify foreground and background via a spatial contraction function that lets each tile represent both distant objects in outdoor scenes and planar reflections as virtual images outside the tile. Decomposing appearance with the specular MLP allows a specular-aware warping loss to provide a second optimization path for camera poses. We apply the alternating direction method of multipliers (ADMM) to achieve consensus among camera poses while maintaining parallel tile optimization. Experimental results show that our method outperforms state-of-the-art neural scene rendering methods by 5%-10% in PSNR, maintaining sharp distant objects and view-dependent reflections across six indoor and outdoor scenes.
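To make the idea of a spatial contraction concrete, here is a minimal sketch. The abstract does not give the formula used in ScaNeRF, so this borrows the contraction popularized by mip-NeRF 360 as an assumption: points inside a ball of a chosen radius (standing in for the tile interior) are left unchanged, and everything outside is squashed into a bounded shell. The function name `contract` and the `radius` parameter are hypothetical.

```python
import math


def contract(point, radius=1.0):
    """Map an unbounded 3D point into a ball of radius 2 * radius.

    Assumption: this is the mip-NeRF 360 style contraction, not
    necessarily the one used by ScaNeRF. Points with norm <= radius
    are returned unchanged; farther points land in the shell between
    radius and 2 * radius.
    """
    norm = math.sqrt(sum(c * c for c in point))
    if norm <= radius:
        return tuple(point)
    # Contracted norm is (2 - radius / norm) * radius, which tends to
    # 2 * radius as norm grows without bound.
    scale = (2.0 - radius / norm) * radius / norm
    return tuple(c * scale for c in point)


near = contract((0.5, 0.0, 0.0))     # inside the ball: unchanged
far = contract((2.0, 0.0, 0.0))      # outside: pulled to norm 1.5
very_far = contract((1e6, 0.0, 0.0)) # approaches norm 2 from below
```

Under this assumed mapping, a distant building or a mirrored virtual image outside the tile still lands at finite coordinates, so it can in principle be stored in the same bounded feature grid as the tile interior.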

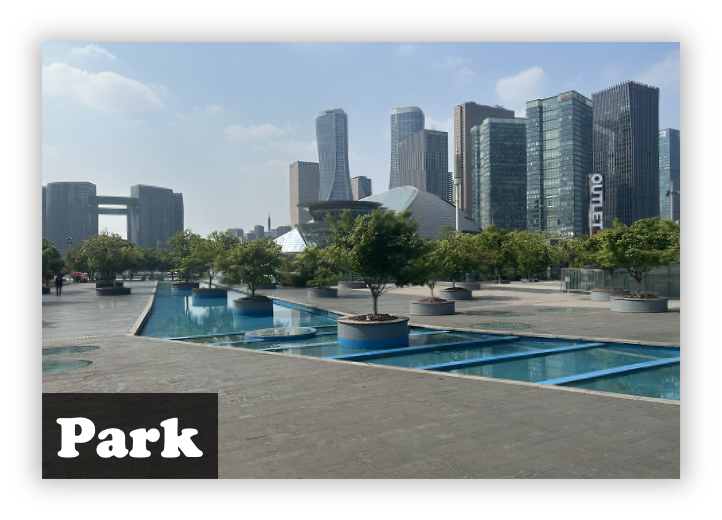

Rendering Results

Method

We address the bundle-adjusting NeRF problem for large-scale 3D scenes by combining a tile-based hybrid neural field scene representation with parallel distributive training via the alternating direction method of multipliers (ADMM).
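As a rough illustration of how ADMM can reach consensus while tiles optimize in parallel, the sketch below runs scaled-form consensus ADMM on a toy problem. Everything here is an assumption made for illustration: the shared camera pose is reduced to a single scalar, and each tile's rendering loss is replaced by a quadratic `0.5 * w * (x - a) ** 2`, where `a` is the pose value that tile would prefer and `w` is how strongly it prefers it. The function name `consensus_admm` and its parameters are hypothetical and do not come from the paper.

```python
def consensus_admm(targets, weights, rho=1.0, iters=200):
    """Toy scaled-form consensus ADMM over per-tile scalar estimates.

    targets[i], weights[i] define tile i's stand-in quadratic loss
    0.5 * weights[i] * (x - targets[i]) ** 2. Returns the consensus
    value z and the per-tile local estimates x.
    """
    n = len(targets)
    z = 0.0            # shared (consensus) pose parameter
    x = [0.0] * n      # per-tile local copies of the pose parameter
    u = [0.0] * n      # scaled dual variables, one per tile
    for _ in range(iters):
        # Local step: each tile minimizes its own loss plus a penalty
        # pulling it toward z. These updates are independent, so they
        # could run in parallel, one per tile.
        x = [(w * a + rho * (z - ui)) / (w + rho)
             for a, w, ui in zip(targets, weights, u)]
        # Consensus step: average the local estimates plus duals.
        z = sum(xi + ui for xi, ui in zip(x, u)) / n
        # Dual step: accumulate each tile's disagreement with z.
        u = [ui + xi - z for xi, ui in zip(x, u)]
    return z, x


z, x = consensus_admm(targets=[1.0, 2.0, 4.0], weights=[1.0, 1.0, 2.0])
```

For these quadratic stand-ins the iteration converges to the weighted mean of the targets, with every local copy agreeing with `z`. In an actual system the local step would be several gradient steps on a tile's rendering loss rather than a closed-form update.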

Rendering Results (Public Data)

We test our method on the rubble dataset (from Mega-NeRF) and the poly-tech dataset (from UrbanScene3D).

Comparisons

We compare our method to other state-of-the-art novel view synthesis methods on indoor and outdoor scenes.

Full Video

Download

@article{wu2023scanerf,
  title={ScaNeRF: Scalable Bundle-Adjusting Neural Radiance Fields for Large-Scale Scene Rendering},
  author={Wu, Xiuchao and Xu, Jiamin and Zhang, Xin and Bao, Hujun and Huang, Qixing and Shen, Yujun and Tompkin, James and Xu, Weiwei},
  journal={ACM Transactions on Graphics (TOG)},
  year={2023}
}

Acknowledgements

Supported by the Information Technology Center and the State Key Lab of CAD&CG, Zhejiang University.

Thanks to Chi Wang for providing the location where the community data was captured.